About our wordmark

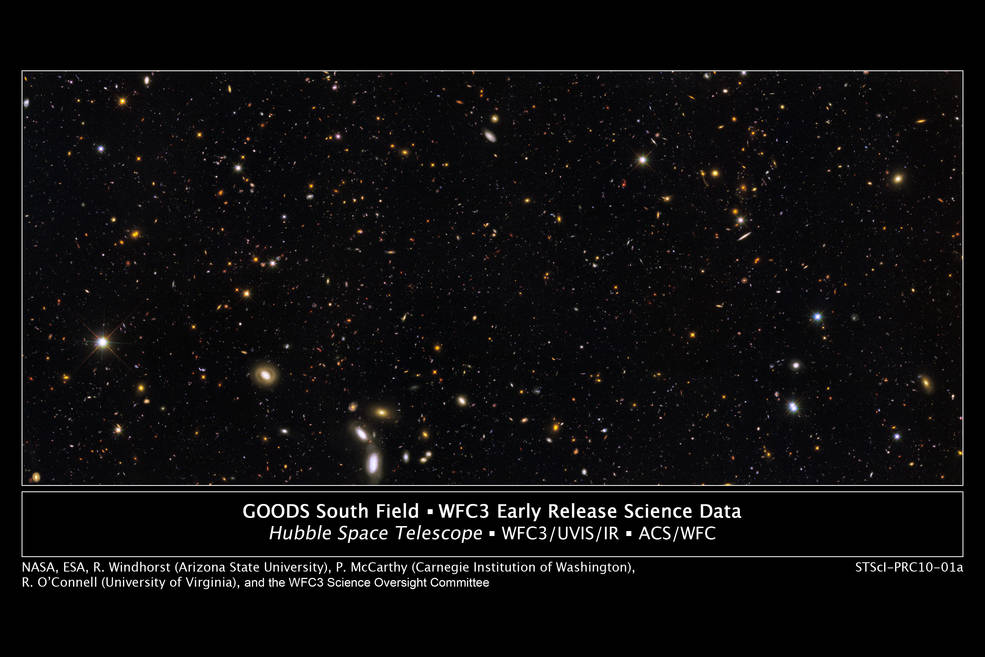

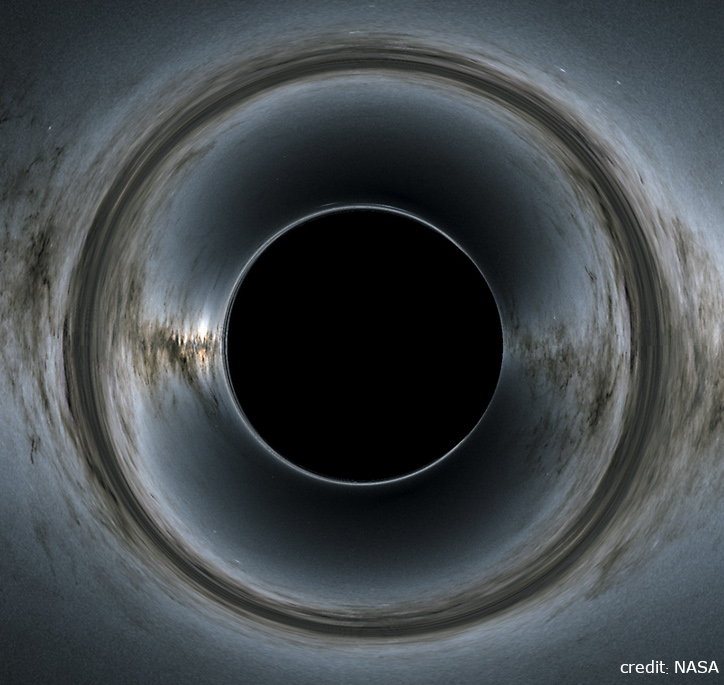

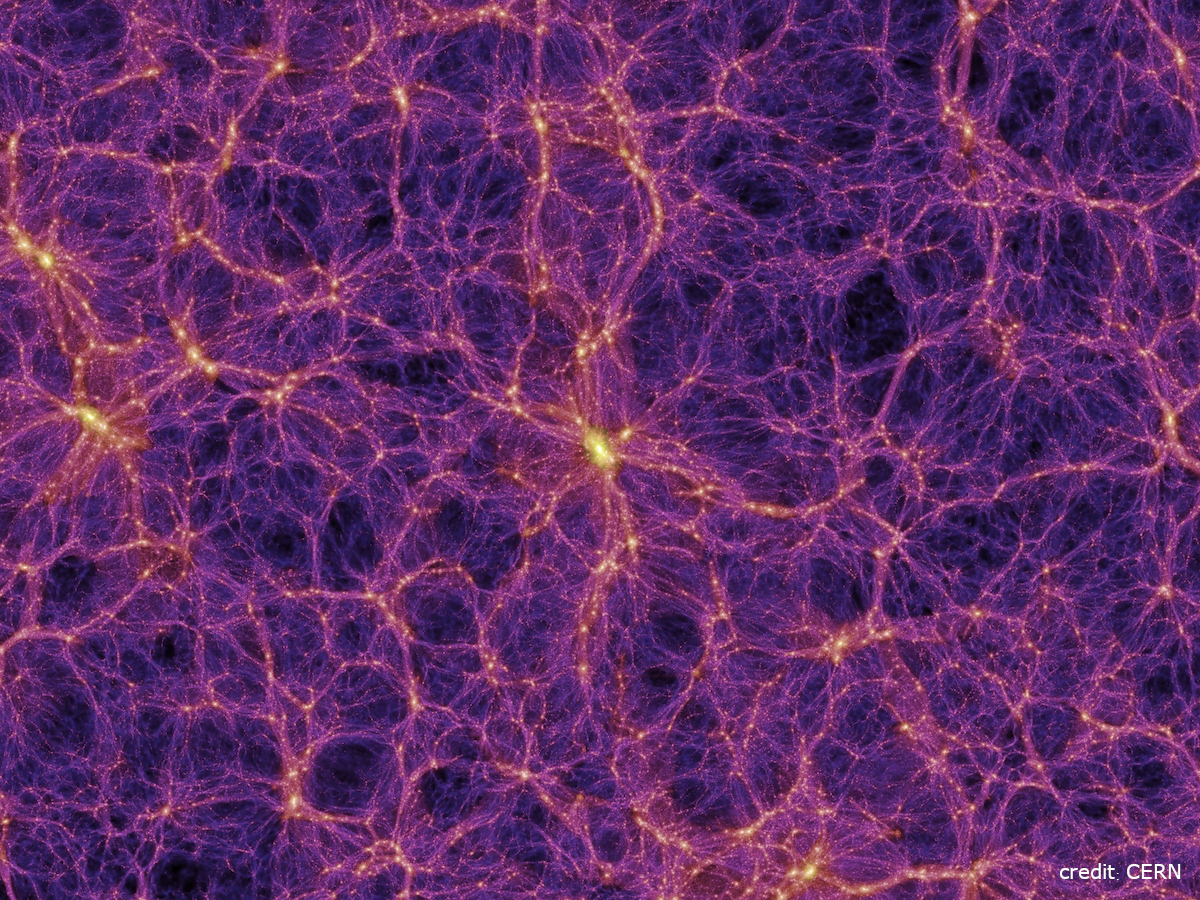

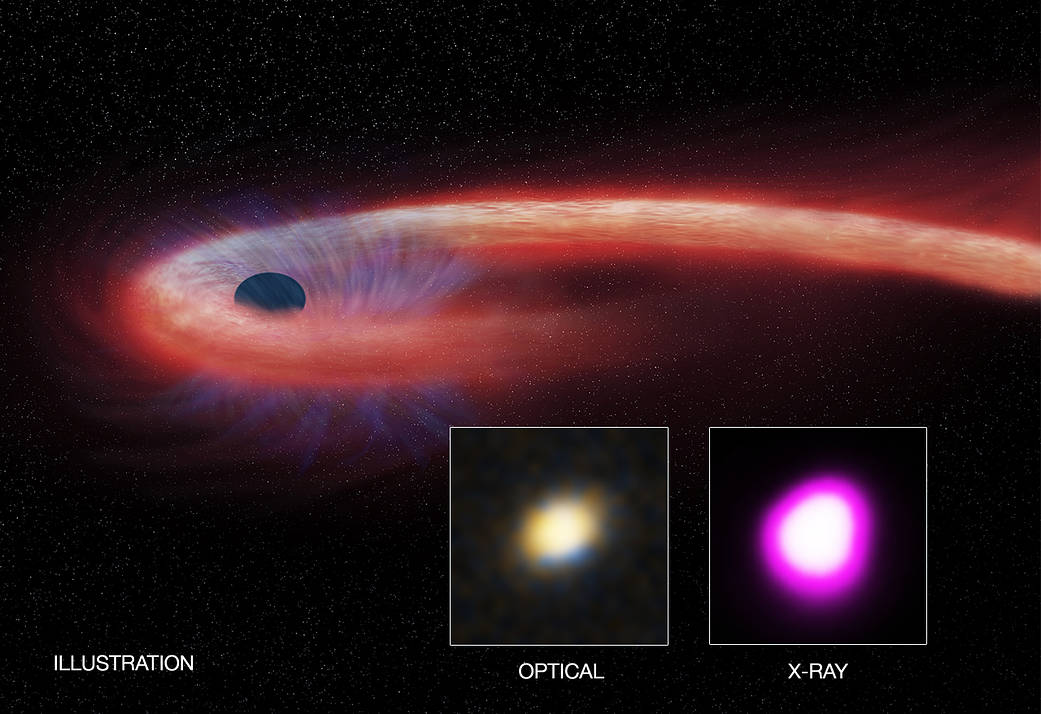

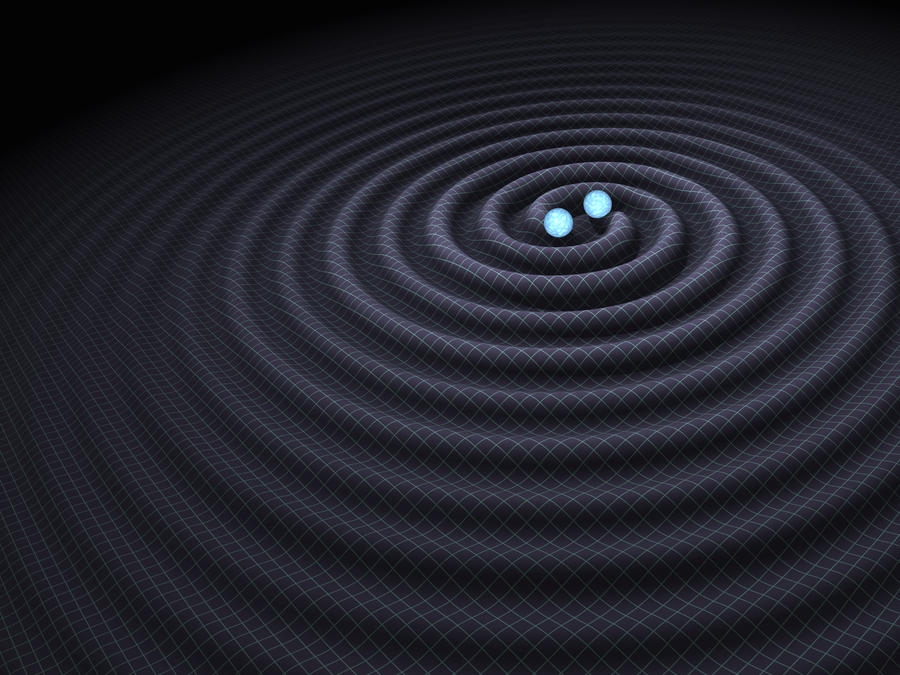

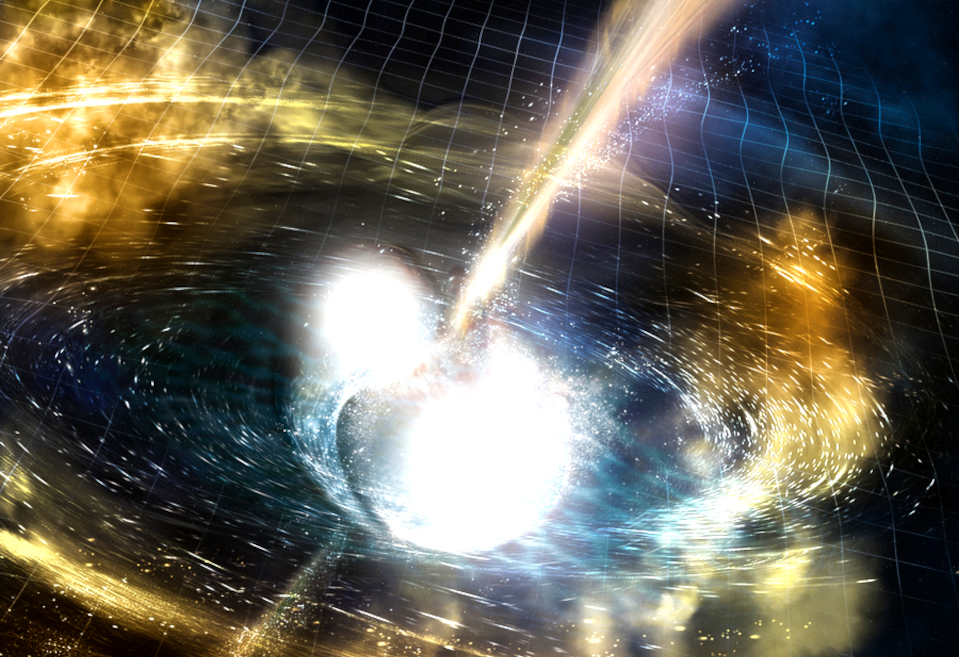

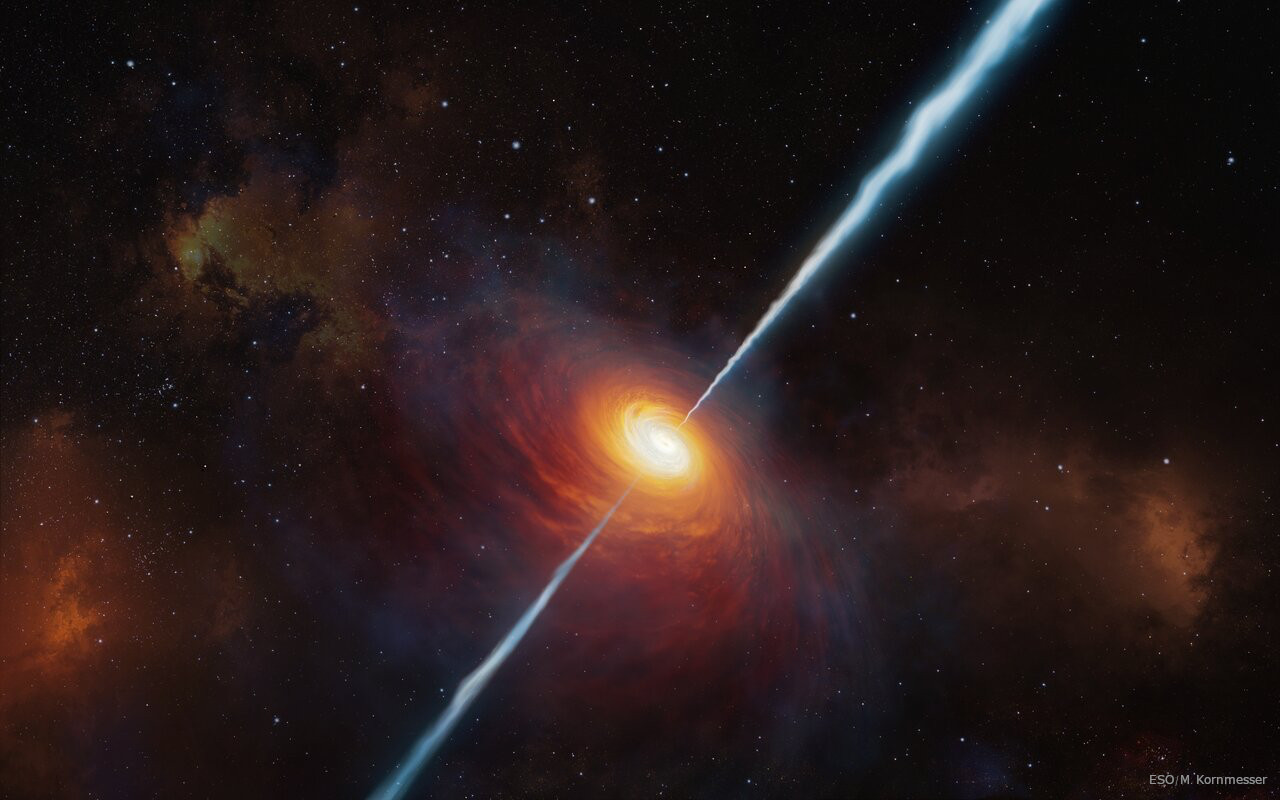

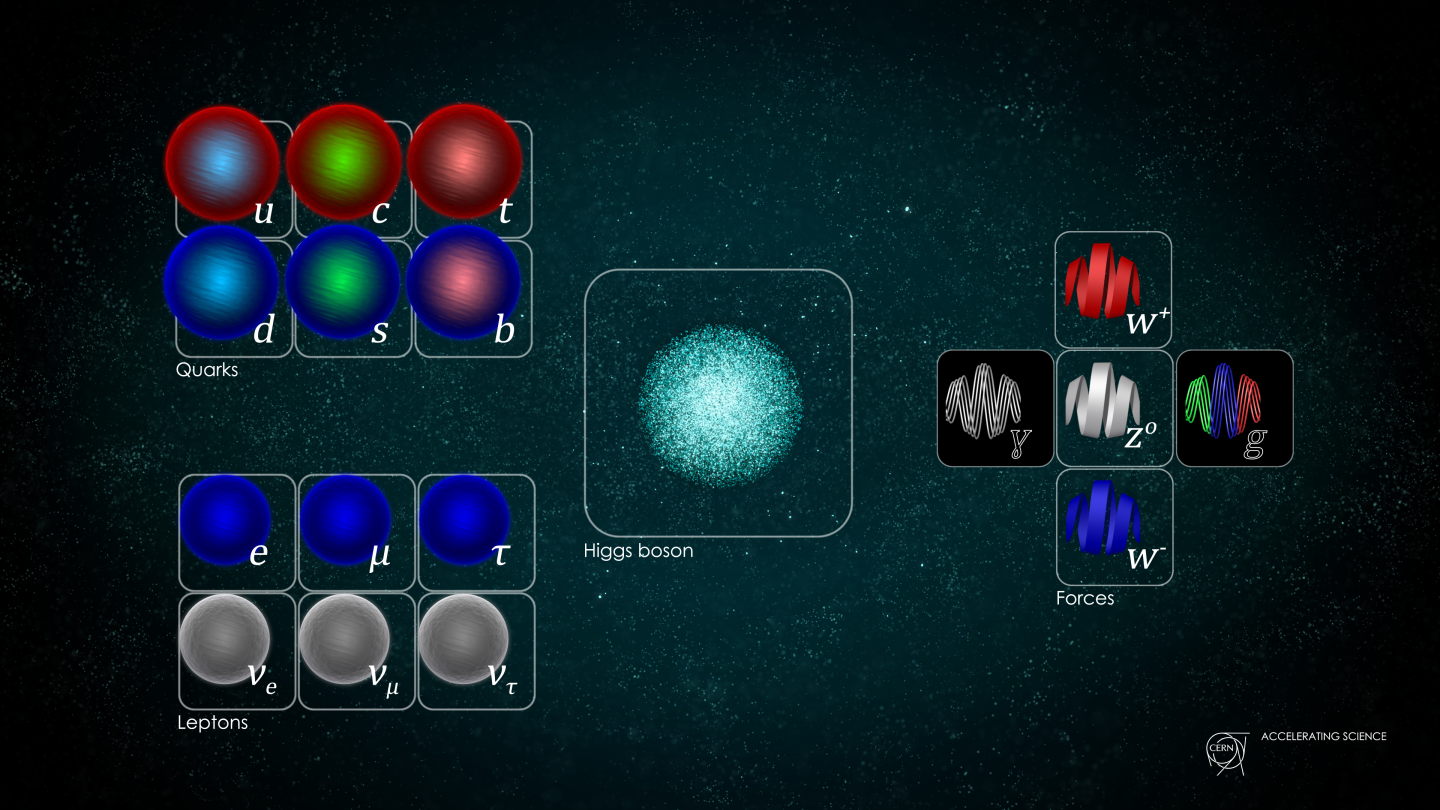

The wordmark symbolizes the scope and variety of research at the IGC. The base of the image represents quantum gravity, evoking the quantum geometry pictured in spin foam models and loop quantum gravity. These are among the approaches to fundamental questions studied at the Center for Fundamental Theory. The middle of the image represents the Center for Theoretical and Observational Cosmology. Its galaxies are embedded in a smooth surface, characteristic of spacetime in general relativity, reflecting the much larger physical scales studied in cosmology. Finally, at the top, the surface curves to an extreme, representing a supermassive black hole accompanied by an energetic jet. Together, these elements depict an active galactic nucleus, inspired by Centaurus A. Just to the right, a pair of black holes approaches merger. This top portion of the wordmark represents the Center for Multimessenger Astrophysics, which specializes in the study of high-energy phenomena in the universe.